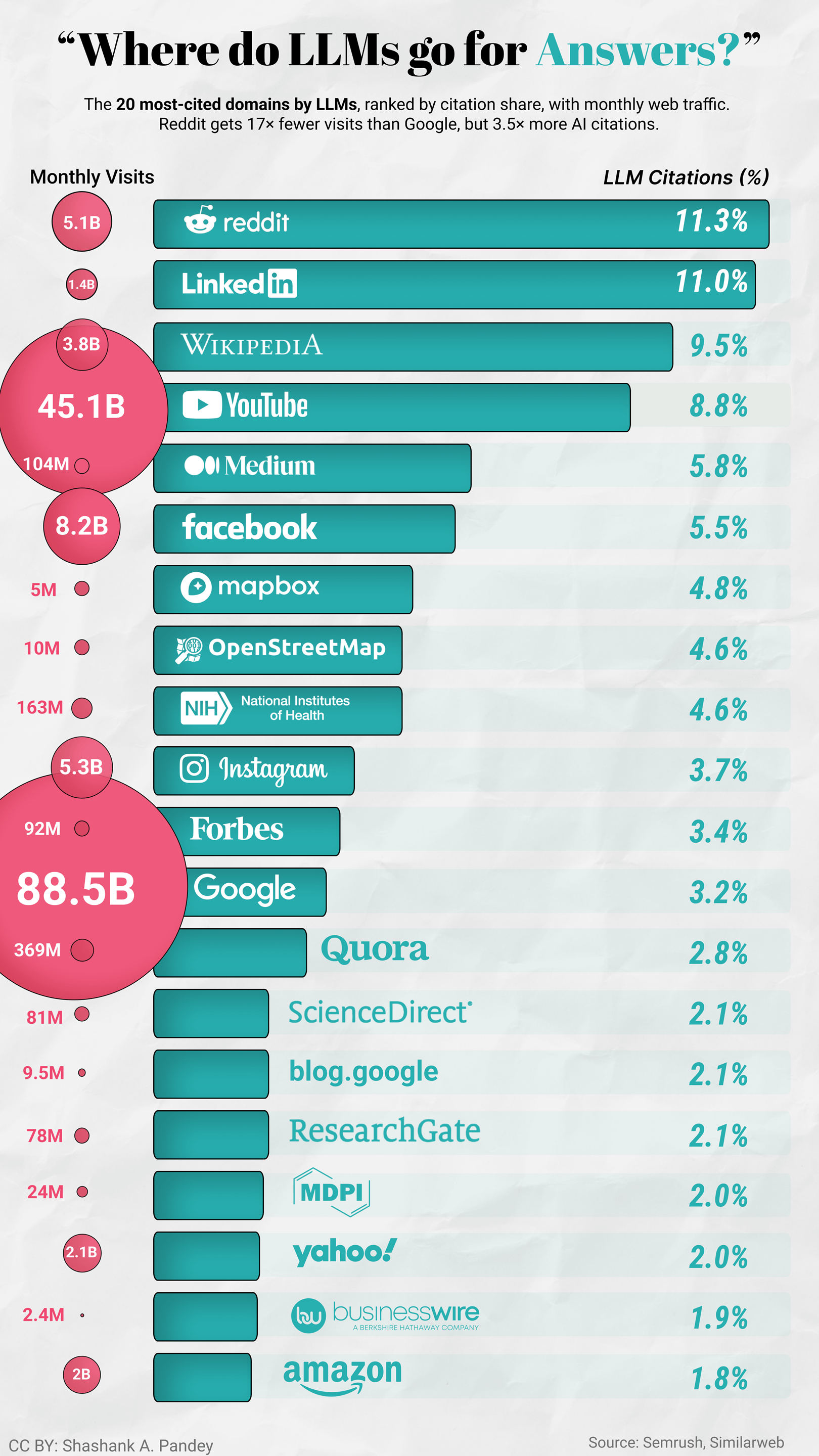

Reddit and LinkedIn together hold 22% of all LLM citations. More than Wikipedia, YouTube, and NIH combined.

That's not random. "I tried both for six months and here's what broke" is a better training signal than a listicle. LLMs seem to weight first-person experience heavily, which means the content SEO has historically undervalued is exactly what AI search favours.

The finding that caught me off guard: Mapbox and OpenStreetMap in the top 10. Neither is a content site. Both are geospatial infrastructure. My read is that this reflects AI agents increasingly needing to interact with the physical world: routing, geocoding, and location lookups. If that's right, LLM citation share might be one of the earliest visible signals of where agent tool-use is concentrating.

The other thing worth sitting with: four sites, NIH, ScienceDirect, ResearchGate, and MDPI, account for roughly 8.9% of total LLM citations. That's the entire academic and scientific credibility layer for AI systems making health and medicine claims. That's thin.

Worth keeping in mind that this describes maybe 5% of current search behaviour. Whether these patterns hold as adoption grows is genuinely unclear.

Data: https://www.semrush.com/blog/linkedin-ai-visibility-study/

Tool: Tableau + Figma

by savage2199

7 Comments

The takeaway I keep coming back to is that citations are basically a proxy for what agents can reliably ground on. First-person debugging writeups and “here is the exact workflow” posts are gold for tool-using agents. Also agree the geospatial infra showing up is a wild tell. I have been tracking similar agent/tool-use trends here: https://www.agentixlabs.com/blog/

Nice write-up, Shashank. What’s the source for the breakdown?

Also, is this across all major LLMs?

I have been searching Google for “*thing I want to find* Reddit”. I remember when I had to scroll to the second page of Google results to find the first Reddit result.

I don’t know about all this, but when I search for something in Brave, the majority of the answers I get link to Reddit.

No wonder the majority of answers are wrong

So all we have to do is flood reddit with wrong answers only?

> The finding that caught me off guard:

Holy AI talking about itself. Fucking hell.